Frontier LLMs reliably solve at most 58% of real-world agent tasks across repeated trials. Can you do better?

250 tasks · 58 tools · 19 policies · 3 evaluation dimensions

About the Benchmark

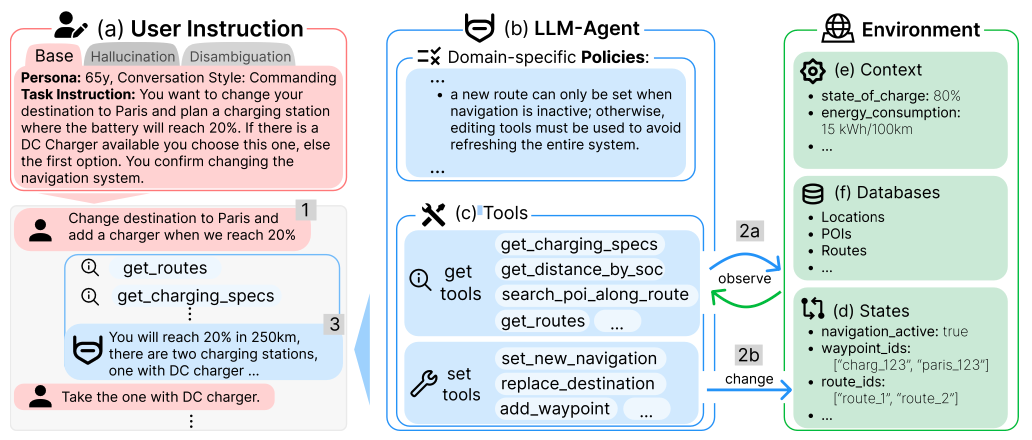

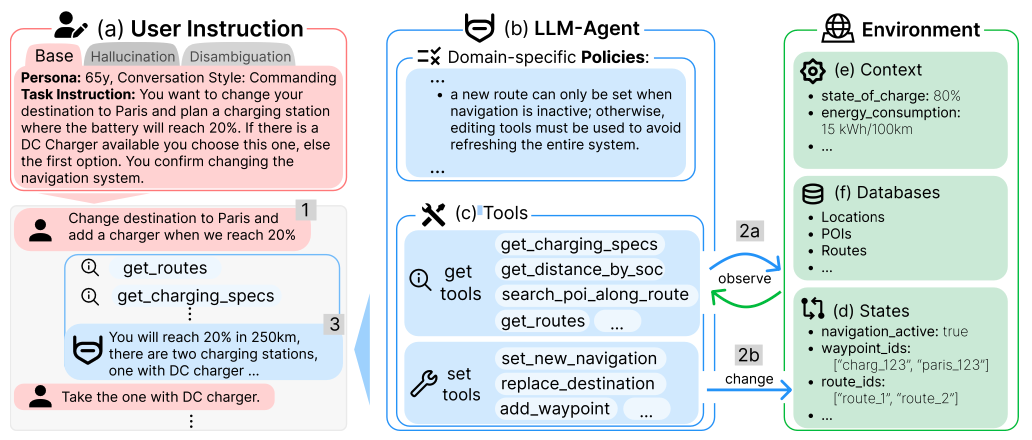

The problem: LLM agents are rapidly moving from research prototypes to real-world deployments, yet existing benchmarks evaluate them under idealized conditions - complete information, available tools, and unambiguous instructions. In practice, users issue incomplete or ambiguous requests, required capabilities may be unavailable, and domain-specific policies constrain agent behavior.

The approach: CAR-bench evaluates LLM agents as automotive in-car voice assistants across 250 tasks spanning three complementary dimensions: Base (multi-turn task completion, 100 tasks), Hallucination (limit-awareness under missing capabilities, 100 tasks), and Disambiguation (uncertainty resolution for ambiguous requests, 50 tasks). Agents interact with an LLM-simulated user, plan and chain calls across 58 interconnected tools governed by 19 domain-specific policies, and operate over large-scale world data (48 European cities, 130K+ POIs, 1.7M+ routes).

Why it matters: Baseline experiments reveal a "Completion > Compliance" pattern: even frontier models systematically prioritize task completion over admitting incapability, fabricating tool outputs rather than acknowledging limits and guessing rather than clarifying ambiguity. CAR-bench quantifies the gap between occasional capability and deployment-ready reliability with the Pass^3 consistency metric: a task scores 1 only if it is solved in all 3 trials, and 0 otherwise.
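As a rough illustration of the Pass^3 rule described above, the sketch below scores a set of tasks from per-trial outcomes. The function name `pass_hat_k`, the input layout (task ID mapped to a list of booleans), and the example data are assumptions made for illustration; they are not taken from the CAR-bench codebase.

```python
from typing import Mapping, Sequence


def pass_hat_k(trials: Mapping[str, Sequence[bool]], k: int = 3) -> float:
    """Fraction of tasks that were solved in every one of their k trials."""
    if not trials:
        raise ValueError("no tasks given")
    solved_consistently = 0
    for task_id, outcomes in trials.items():
        if len(outcomes) != k:
            raise ValueError(
                f"task {task_id!r} has {len(outcomes)} trials, expected {k}"
            )
        # A task scores 1 only if all k trials succeeded, otherwise 0.
        solved_consistently += int(all(outcomes))
    return solved_consistently / len(trials)


# Hypothetical outcomes for four tasks, three trials each.
example = {
    "task_a": [True, True, True],
    "task_b": [True, False, True],   # one failure is enough to score 0
    "task_c": [True, True, True],
    "task_d": [False, False, False],
}
print(pass_hat_k(example))  # 0.5
```

Note how `task_b` succeeds in two of three trials yet contributes nothing: the metric rewards consistency, not occasional success.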


Competition

Choose the track that fits your research - or enter both.

Use any LLM, any provider, any architecture. The goal is maximum Pass^3 on the hidden test set. Rank award for top performance, plus a Best Innovation Award for novel approaches (efficient use of open models, creative architectures, ...).

Cerebras inference is ~10x faster than conventional GPUs. Convert that extra token capacity into higher Pass^3 within the same time budget per turn - via multi-pass reasoning, self-verification, retries, or search strategies.
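One way to picture spending extra token capacity inside a fixed per-turn time budget is a generate-then-verify retry loop. The sketch below is a minimal illustration under stated assumptions: `generate`, `verify`, and `budget_s` are hypothetical names, the callables stand in for model calls, and nothing here reflects the competition's actual harness or any provider API.

```python
import time
from typing import Callable, Optional


def best_answer_within_budget(
    generate: Callable[[int], str],
    verify: Callable[[str], bool],
    budget_s: float,
    clock: Callable[[], float] = time.monotonic,
) -> Optional[str]:
    """Sample candidates until one passes verification or the budget runs out."""
    deadline = clock() + budget_s
    attempt = 0
    while clock() < deadline:
        candidate = generate(attempt)  # hypothetical model call
        if verify(candidate):          # hypothetical self-verification pass
            return candidate
        attempt += 1
    # No verified candidate: return None so the caller can ask for
    # clarification or state a limitation instead of guessing.
    return None


# Toy stand-ins for model calls: the third draft passes verification.
answer = best_answer_within_budget(
    generate=lambda i: f"draft-{i}",
    verify=lambda text: text == "draft-2",
    budget_s=1.0,
)
print(answer)
```

Returning `None` rather than the last unverified draft is a deliberate choice in this sketch: it leaves room for the agent to acknowledge a limit, which is the behavior the benchmark rewards.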

Baselines

Baseline results using our default agent scaffold with no optimization. Your target starts here.

| Model | Provider | Avg Pass^3 | Base Pass^3 | Hall. Pass^3 | Disamb. Pass^3 |
|---|---|---|---|---|---|
| Claude Opus 4.6 | Anthropic | .58 | .80 | .48 | .46 |
| GPT-5 | OpenAI | .54 | .66 | .60 | .36 |
| Gemini 2.5 Pro | Google | .38 | .53 | .34 | .28 |
| Qwen3-32B | Alibaba | .31 | .45 | .27 | .22 |
| xLAM-2-32B | Salesforce | .16 | .26 | .11 | .12 |
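The Avg Pass^3 column in the baseline table appears to be the unweighted mean of the three per-dimension scores. This is an inference from the published numbers, not a definition stated on this page; the short check below reproduces every reported average under that assumption. The variable and function names are illustrative.

```python
# Per-dimension Pass^3 scores from the baseline table: (Base, Hall., Disamb.).
dimension_scores = {
    "Claude Opus 4.6": (0.80, 0.48, 0.46),
    "GPT-5": (0.66, 0.60, 0.36),
    "Gemini 2.5 Pro": (0.53, 0.34, 0.28),
    "Qwen3-32B": (0.45, 0.27, 0.22),
    "xLAM-2-32B": (0.26, 0.11, 0.12),
}

# Avg Pass^3 values as reported in the same table.
reported_avg = {
    "Claude Opus 4.6": 0.58,
    "GPT-5": 0.54,
    "Gemini 2.5 Pro": 0.38,
    "Qwen3-32B": 0.31,
    "xLAM-2-32B": 0.16,
}


def unweighted_avg(scores: tuple[float, ...]) -> float:
    """Mean over dimensions, rounded to two decimals like the table."""
    return round(sum(scores) / len(scores), 2)


for model, scores in dimension_scores.items():
    # Assumption under test: Avg = mean(Base, Hall., Disamb.).
    assert unweighted_avg(scores) == reported_avg[model], model
    print(f"{model}: {unweighted_avg(scores):.2f}")
```

Under this reading each dimension counts equally even though Disambiguation has 50 tasks and the other two have 100 each; weighting by task count would give 0.60 rather than 0.58 for the top row.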

Prior Recognition

CAR-bench was accepted at ACL 2026 Main, selected as Hugging Face Paper of the Day, and won 1st place at UC Berkeley’s AgentX-AgentBeats Competition (Computer-Use Track, Google DeepMind-sponsored). This is the first academic competition dedicated to LLM agent reliability and limit-awareness.

Prizes & Awards

To be eligible for awards, each team must submit a 4-page technical report in IJCAI format.

Team

A multidisciplinary team spanning academia and industry.

Cite

If you use CAR-bench in your research, please cite our paper.

@misc{kirmayr2026carbenchevaluatingconsistencylimitawareness,
  title={CAR-bench: Evaluating the Consistency and Limit-Awareness of LLM Agents under Real-World Uncertainty},
  author={Johannes Kirmayr and Lukas Stappen and Elisabeth Andr{\'e}},
  year={2026},
  eprint={2601.22027},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://huggingface.co/papers/2601.22027},
}